By Jack Pouchet, Vice President of Business Development, Global Solutions, at Vertiv

Core-to-edge data centers will seamlessly integrate core facilities with a more intelligent, mission-critical edge of network, moving beyond the distributed data center toward a powerful, connected system on the verge of a software-defined future. These data centers eventually will become the principal model for IT networks of the 2020s, and their emergence is one of five data center trends expected to reshape the industry in 2018 and beyond.

The evolution of the edge is driving many of these trends. As computing moves closer to the end user and assumes a more significant role in supporting and providing network services, the nature of edge facilities and the infrastructure systems needed to support them is changing. These are foundational shifts, on par with the introduction of the cloud and the ensuing aftershocks. The cloud changed everything, and continues to, but in ways that were impossible to predict. We should expect the same as the industry migrates to the edge. The only thing we know for sure is that expectations of providers and end users will remain simple and uncompromising – fast, seamless, uninterrupted service whenever and wherever it’s needed.

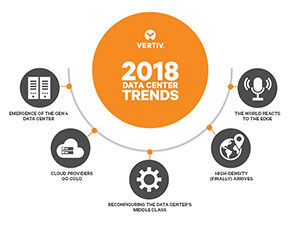

Let’s look at the five trends we expect to impact the data center ecosystem in 2018 and beyond:

1. Emergence of the Core-to-Edge Data Center:

Organizations increasingly are relying on the edge of their networks – an edge that can be anything from a simple IT closet to a 1,500-square-foot micro-data center. A fully realized core-to-edge data center will harmoniously integrate edge and core with innovative architectures allowing hundreds or even thousands of distributed IT nodes to operate in concert.

These networks will add capacity in scalable, economical modules, virtually in real time. They will leverage high-density power supplies, lithium-ion batteries, advanced power distribution units, optimized thermal solutions and advanced monitoring and management technologies (perhaps machine learning or even artificial intelligence) to deliver a sophisticated, flexible network that is more powerful, reliable and efficient than anything we’ve seen to date.

These designs will reduce latency and up-front costs, increase utilization rates, remove complexity to make operation and service easier and more cost-effective, and allow organizations to add network-connected IT capacity as they need it.

2. Cloud Providers Go Colo:

The dirty little secret about cloud providers is that they would rather not be in the data center business. Data centers are expensive, requiring extensive resource allocation, and some cloud providers simply are not equipped to keep up with skyrocketing demand. Most providers would prefer to focus on service delivery, sales and marketing, and other priorities over new data center builds that can take months or even years. Seeking an alternative, more and more cloud providers are turning to colocation providers to meet their capacity demands.

Colo providers are data center experts, with a recipe for building and operating the facilities that deliver efficiency and scalability – not to mention reduced costs and increased peace of mind. Additionally, the global proliferation of colocation facilities allows cloud providers to choose colo partners in locations that match end-user demand, meaning these colo data centers operate more or less like edge facilities. Colo providers are responding to the influx of cloud business by provisioning portions of their data centers specifically for cloud services or even providing entire build-to-suit facilities.

3. Reconfiguring the Data Center’s Middle Class:

We all know the fastest growth in the data center market is happening in hyperscale and edge spaces. Much has been written and said about the shrinking share of the in-between facilities, specifically traditional enterprise data centers. That shift is real and isn’t likely to change, but most organizations will not abandon their existing enterprise assets altogether. The opportunity going forward is in reimagining and reconfiguring those assets to support these evolving network architectures.

Data center consolidation will be a big part of this. Already, organizations with multiple data centers are consolidating their internal IT resources into single facilities. Whether that’s an existing data center or a new, smaller, more efficient building typically depends upon the extent of the equipment refresh required. Keep in mind, many of these facilities are 10 to 20 years old, built at the height of the enterprise data center phase, and already showing their age. In those cases, organizations are likely to transition what they can to the cloud or colocation and downsize to smaller owned IT facilities, likely leveraging prefabricated construction that can be deployed and scaled quickly to meet urgent needs.

The new enterprise facility will be smaller, smarter, more efficient and secure, with high levels of availability – consistent with the mission critical nature of the data housed within.

4. High-Density (Finally) Arrives:

I know, I know … we’ve heard this before. True, the data center community has been predicting a spike in rack power densities for a decade, with little actual movement on that front. That’s changing, and it’s happening quickly. Mainstream deployments at 15 kW per rack are no longer uncommon, and some facilities already are deploying 25 kW racks. There are reasons to believe the shift toward higher densities will continue.

To wit: The widespread adoption of hyper-converged computing systems demands high-density solutions. Colos, where space is at a premium, equate high density with high revenues. And then there’s this simple reality: the advances in server and chip technologies that delayed the advent of high-density racks have plateaued. It had to happen eventually.
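To put rough numbers on why density matters where space is at a premium, here is a minimal back-of-the-envelope sketch. The 15 kW and 25 kW figures are the rack densities mentioned above; the 300 kW total IT load, the 5 kW baseline and the `racks_needed` helper are assumptions chosen purely for illustration, not measurements from any facility.

```python
import math


def racks_needed(it_load_kw: float, rack_density_kw: float) -> int:
    """Return how many racks are needed to host a given IT load
    at a given per-rack power density (both in kW)."""
    if it_load_kw < 0 or rack_density_kw <= 0:
        raise ValueError("load must be >= 0 and density must be > 0")
    # Round up: a partially filled rack still occupies floor space.
    return math.ceil(it_load_kw / rack_density_kw)


# Hypothetical total IT load, chosen only for illustration.
IT_LOAD_KW = 300.0

# 15 and 25 kW per rack are the densities cited in the article;
# 5 kW per rack is an assumed lower-density baseline for comparison.
for density_kw in (5.0, 15.0, 25.0):
    count = racks_needed(IT_LOAD_KW, density_kw)
    print(f"{density_kw:>4.0f} kW/rack -> {count} racks")
```

Under these assumptions, the same load fits in far fewer racks as density rises, which is the floor-space argument in a nutshell. The sketch deliberately ignores the power and cooling changes that higher densities demand, and those are where much of the real cost sits.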

Still, mainstream movement toward higher densities is likely to be cautious. For one, significantly higher densities require substantial changes to a data center’s design, from the power infrastructure to thermal management. Whether it’s liquid cooling, chip cooling, submerged servers or high-density air cooling – all of which are at or near real-world maturity – implementation will require significant investment and infrastructure restructuring. It’s coming, but widespread adoption will be slow. Expect to see compartmentalized, purpose-built deployments.

5. The World Reacts to the Edge:

We’ve already discussed the increasing importance of edge computing, but there are considerations related to this move that go beyond the IT and infrastructure equipment. These considerations include the physical and mechanical design, construction and security of edge facilities as well as complicated questions related to data ownership. Governments and regulatory bodies around the world increasingly will be challenged to consider and act on these issues.

Physical security and engineering and construction standards are incredibly complex issues and yet, somehow, the easier of the two challenges to address. Europe has been at the forefront, with the European standard EN 50600 establishing some baselines for building construction, power distribution, air conditioning, security systems, and safe management and operation in IT facilities. There still are some gray areas, and it applies only to European nations that recognize the EN standards, but EN 50600 is a start, and the rest of the world is expected to follow suit relatively soon.

The more difficult challenge is tied to data ownership and security. An incomprehensible amount of data is transmitted around the world every second, and businesses and governments rely on virtually instantaneous data analysis for competitive advantages. Moving all that data to the cloud or a core data center and back for analysis is too slow and cumbersome, so more and more data clusters and analytical capabilities sit on the edge – an edge that resides in different cities, states or countries than the home business or agency. The question is: who owns that data, and what are they allowed to do with it?

Again, Europe is at the forefront in addressing these questions. The EU General Data Protection Regulation (GDPR) will require organizations to know where data is located and will more closely restrict data sharing across borders, simplifying and standardizing data privacy laws across the EU (until Brexit, anyway). But if GDPR is a step toward simplification, the Markets in Financial Instruments Directive (MiFID II) will introduce more complexity – and a considerable challenge for edge facilities. MiFID II, which will go into effect this year, stipulates that organizations providing financial services to clients must record and store all communications leading to a transaction. All of those calls, emails, texts, recorded conversations, and other interactions must be captured and stored, and that will create unprecedented stress on edge locations like bank branches.

Global efforts to better monitor and manage data storage and transmission, especially in edge locations, are just beginning. Initiatives are under way in the U.S. and around the globe, with new guidelines and regulations expected as early as this year.

We are monitoring all of these trends and more, as they will likely affect the design and operation of all IT, network, and data center facilities.
