Data centers have become an essential part of everyday life. As their importance and scale have grown, so have concerns over their power usage. Looking forward, emerging technologies such as ubiquitous video on demand, 5G telephony, autonomous vehicles and the smart cities they travel in could make power demand spiral beyond current capacity. Every data center operator needs to design and build with an eye toward both hyper-efficiency and a sustainable, greener future. In years past, the chief concerns for data centers were uptime, resilience and reliability. That focus was tempered in 2007 by the US EPA’s report to Congress, which put the industry’s energy use at 2 percent of the total power available and projected a future in which data centers would consume an increasingly significant share of the US power grid’s capacity. Fortunately, the industry’s response has largely kept overall energy consumption stable while increasing network and compute capabilities severalfold.

Subsequent reports, starting in June 2016, showed a relatively minor increase in bulk consumption (73B kWh estimated for 2020 vs. 70B kWh in ’06), with data centers’ share of overall energy use holding near that same 2 percent, despite a huge increase in mobile devices (the initial report predated the iPhone) and ubiquitous online connectivity. These gains were made partly by picking the “low-hanging fruit” of energy savings: raising ambient temperatures in the white space through the work of ASHRAE TC 9.9, the advent and near-universal adoption of virtualization, efficiency gains in UPSs and power electronics, and even using solid-state variable-frequency drives, rather than louvers, to control HVAC fans.

On the IT side, besides virtualization, large users have specified 80 PLUS-rated power supplies (better than the standard 80 percent efficiency) and used single-corded servers (vs. dual-power-supply models) to greatly reduce consumption, in addition to using more solid-state storage media and more efficient networking devices.

Finally, the advent of the hyperscale era has brought data centers that take advantage of economies of scale, connecting to the grid at transmission-level voltages and paying keen attention to siting in more centralized and environmentally friendly locations. Of course, smaller and strategically sited facilities may not be able to take advantage of all of these strategies. Still, for every data center, every watt counts toward building a more sustainable future for all. What, then, are the short-term, tactical actions that can provide immediate benefit and yield significant electric utility cost savings?

SUSTAINABILITY PILLARS

Sustainability improvements fit into three main pillars: responsible sourcing, efficient consumption, and lifecycle management. These pillars consider how the power is generated, which assets consume that power, and what the indirect environmental impacts of those assets are over their lifetime.

Responsible sourcing often means leveraging renewables, primarily through power purchase agreements (PPAs), but data centers are also becoming more active participants in the grid. As the data center industry incorporates renewables, utilities need to offset the impact of adding those variable forms of power to the grid. As energy demand becomes more dynamic, it also becomes more challenging to manage, which affects grid stability. Although this presents new challenges, advances in battery technology, smart grid functionality and new business models will provide a path forward for incorporating more sustainable energy into the data center industry. One of the key technologies to watch on the sustainability journey is energy storage. New uses of existing technology, such as replacing diesel with lower-carbon hydrogen or natural gas fuel cells, and chemical storage media, such as lithium-ion batteries with their increased power density or emerging chemistries like Natron’s Prussian blue sodium-ion, will help shape the sustainable future of data centers.

Efficient consumption is driven by innovative designs and technologies. On the design side, the evolution of data center topologies has been a major driver of sustainability. Availability and reliability are essential for the data center, which means levels of redundancy are required to fully support the critical loads in case of outages or other emergencies. However, having too much redundant equipment is neither cost-effective nor sustainable. With the advent of grid connections at or near transmission-level voltages, concerns over reliability have been assuaged, and data center infrastructure design has become an important place to start driving efficient energy consumption and sustainability. More innovative designs, such as shared redundant or block redundant topologies, involve less redundancy while still maintaining high levels of availability, making them more sustainable.

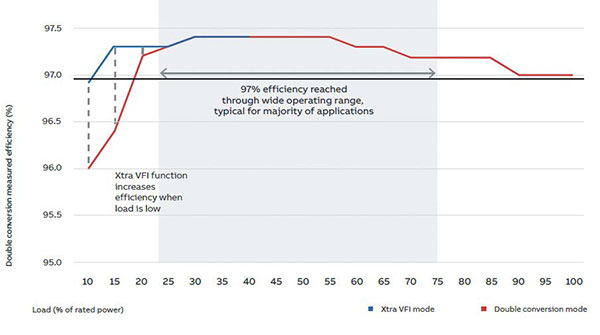
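To make the redundancy trade-off concrete, here is a rough sketch that compares installed UPS capacity for a fully duplicated (2N) design against a block redundant design with one reserve block. The function names, the 4 MW load and the 1 MW block size are illustrative assumptions, and the definitions are deliberately simplified; real topologies vary by site and vendor.

```python
import math

def installed_capacity_2n(load_kw: float) -> float:
    """2N (assumed baseline): two full systems, each sized for the whole load."""
    return 2 * load_kw

def installed_capacity_block_redundant(load_kw: float, block_kw: float,
                                       reserve_blocks: int = 1) -> float:
    """Block redundant (simplified): enough blocks to carry the load,
    plus a small number of reserve blocks that can take over for any one of them."""
    active_blocks = math.ceil(load_kw / block_kw)
    return (active_blocks + reserve_blocks) * block_kw

def utilization(load_kw: float, installed_kw: float) -> float:
    """Fraction of installed capacity that actually carries load."""
    return load_kw / installed_kw

load_kw = 4000.0   # hypothetical critical load
block_kw = 1000.0  # hypothetical UPS block size

for name, installed in (
    ("2N", installed_capacity_2n(load_kw)),
    ("Block redundant", installed_capacity_block_redundant(load_kw, block_kw)),
):
    print(f"{name}: {installed:,.0f} kW installed, "
          f"{utilization(load_kw, installed):.0%} utilized")
```

Under these assumptions, the block redundant design carries the same load with less installed equipment and runs that equipment at a higher utilization.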

On the technology side, we continue to see incremental improvements that systematically drive out energy waste. As an example, some UPSs use a voltage- and frequency-independent (VFI) double conversion operating mode for an efficiency of up to 97.4 percent. If desired, a current-generation UPS can operate in voltage- and frequency-dependent (VFD) ECO mode to attain up to 99 percent efficiency. The older concern about reduced efficiency when a UPS runs significantly under capacity can be mitigated by improved power electronics and the VFI operating mode described above, which offers a smart way to minimize losses and improve efficiency safely while running in double conversion.
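A quick back-of-the-envelope calculation shows why a point or two of UPS efficiency matters. The sketch below assumes a constant, hypothetical 1 MW load and treats the "up to" figures of 97.4 and 99 percent as if they held all year, which real installations will not do exactly.

```python
HOURS_PER_YEAR = 8760

def annual_loss_kwh(load_kw: float, efficiency: float) -> float:
    """Energy dissipated in the UPS over one year at a constant output load."""
    input_kw = load_kw / efficiency  # power the UPS must draw to deliver load_kw
    return (input_kw - load_kw) * HOURS_PER_YEAR

load_kw = 1000.0  # hypothetical constant load, not a figure from the article

for mode, efficiency in (("VFI double conversion", 0.974),
                         ("VFD ECO mode", 0.99)):
    loss = annual_loss_kwh(load_kw, efficiency)
    print(f"{mode}: about {loss:,.0f} kWh lost per year")
```

The absolute numbers scale with the load, but the ratio between the two modes stays the same: the losses at 99 percent efficiency are well under half of those at 97.4 percent.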

When VFI mode is enabled, the UPS automatically adjusts the number of active modules according to the load power requirements. Modules that are not needed are switched to a standby state of readiness, primed to transfer back to active mode if the load increases. The efficiency improvements achieved by this mode of operation are especially significant when the load is less than 25 percent of full UPS system capacity – a range where UPS systems traditionally fare poorly. The switching scheme parameters can be configured by the user. To increase reliability, extend service life and equalize aging, the system rotates modules between active and standby mode at fixed intervals. Should there be a mains failure or other abnormal situation, all modules revert to active mode within milliseconds.
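The module-switching behavior described above can be sketched in a few lines. This is an illustration only, not the control logic of any actual UPS: the 80 percent maximum loading per module, the single redundant module, and the function names are all assumptions chosen for the example.

```python
import math

def active_modules_needed(load_kw: float, module_kw: float, installed: int,
                          redundancy: int = 1, max_loading: float = 0.8) -> int:
    """Number of modules to keep active; the remainder can wait in standby.

    max_loading and redundancy are assumed, user-configurable parameters.
    """
    if load_kw < 0 or module_kw <= 0 or installed < 1:
        raise ValueError("load must be non-negative; module size and count positive")
    for_load = math.ceil(load_kw / (module_kw * max_loading))
    # Never go below one module or above what is physically installed.
    return max(1, min(installed, for_load + redundancy))

def rotate_roles(modules: list[str], active_count: int,
                 step: int) -> tuple[list[str], list[str]]:
    """Round-robin rotation: shift which modules are active at each interval
    so that operating hours, and therefore aging, are spread evenly."""
    shift = step % len(modules)
    order = modules[shift:] + modules[:shift]
    return order[:active_count], order[active_count:]

modules = [f"M{i}" for i in range(1, 11)]  # ten hypothetical 100 kW modules
count = active_modules_needed(load_kw=200.0, module_kw=100.0,
                              installed=len(modules))
active, standby = rotate_roles(modules, count, step=1)
print(f"Active: {active}")
print(f"Standby: {standby}")
```

At 20 percent load, this sketch keeps four modules active and parks six in standby. On a mains failure, a real system would simply return every module to active mode, which corresponds to setting the active count to the installed count.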

Lifecycle management is often overlooked but can have a huge impact on the overall sustainability of a system. For example, switching from traditional time-based maintenance to predictive maintenance using condition-based monitoring has many environmental benefits. Condition-based monitoring triggers maintenance based on predictive indicators rather than a set time interval. Health information is collected from the equipment and system, aggregated, analyzed and compared to historical data to provide advance warning of degrading equipment performance or impending failure. This optimizes operations, reduces the risk of downtime, and eliminates the waste and security risk associated with premature or unnecessary maintenance. If this is applied to the motors and drives in a data center’s cooling system, for example, it can not only improve operational efficiency but also extend the life of the equipment, resulting in less waste.
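As a simple illustration of comparing health data against history, the sketch below flags a piece of equipment when its recent readings drift too far from a historical baseline. The z-score test, the threshold of 3 and the vibration readings are assumptions made for the example; production monitoring systems typically use richer models.

```python
from statistics import mean, stdev

def needs_maintenance(history: list[float], recent: list[float],
                      z_threshold: float = 3.0) -> bool:
    """Return True when the recent average deviates from the historical
    baseline by more than z_threshold standard deviations."""
    baseline = mean(history)
    spread = stdev(history)  # requires at least two historical readings
    if spread == 0:
        return mean(recent) != baseline
    z_score = abs(mean(recent) - baseline) / spread
    return z_score > z_threshold

# Hypothetical fan-motor vibration readings in mm/s.
history = [2.0, 2.1, 1.9, 2.0, 2.2, 1.8, 2.0, 2.1]

print(needs_maintenance(history, recent=[2.0, 2.1, 1.9]))  # healthy readings
print(needs_maintenance(history, recent=[2.9, 3.1, 3.0]))  # drifting upward
```

The point of the design is that a work order is raised by the second call, when the data shows a change, rather than by the calendar.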

EVERY WATT COUNTS

From a cost and stewardship perspective, every watt counts. Taken together, all the incremental improvements mentioned above can add up to a very significant reduction in energy use.

Dave Sterlace is Head of Data Center Technology at ABB.
